Episode 2.1.1

The Mandate

Episode 2: Shadows

Speakers: Main Narrator (MN). [Text in blue]

Second Narrator, synthetic female voice (SN). [Text in black]

[Written announcement against black screen]:

“This episode builds on topics from Episode 1, link in the description. Reference to these topics may also be found in the companion book The Mandate, link also in the description.”

[Fade to black]

[Sandstone shoreline at East Point, Saturna Island. MN walks towards the camera from about 100 m away. Greek text is ghosted over part of the scene.]

[SN]: “Imagine a number of captives chained from infancy inside a cave. The cave has a wide entrance and the captives are seated with their backs to it, unable to turn.

“From a distance, a bright light casts its rays onto the far wall of the cave.

“A road runs past the entrance, on which merchants carry vessels, farm animals, and other sundry items. The prisoners watch their shadows moving on the cave wall, speculating on their behaviour: Which shadow will appear next? Which is likely to follow? Which ones come together with certain other ones?

“In time, the prisoners start noticing patterns in what they see.

“One day, a prisoner is freed from the cave. He feels the discomfort of new movements and the distress of seeing things in the open light. He begins to comprehend a different world made of landscapes, solid objects, stars [slides of corresponding scenes]. He soon accepts that there is a reality higher than the one he experienced in the cave.”

[MN comes to stand before the camera.]

[MN]: “Even rephrased for brevity, these passages from Plato’s Republic still challenge us, intellectual descendants of his civilization. Like him, we continue to ask: What is reality? To what extent does it correspond to the data from our senses? Could there even be an ideal form of reality beyond what we perceive, as Plato also proposed?

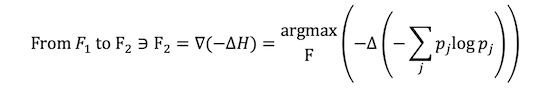

“We are about to re-enter the allegorical cave in order to examine it with the tools of the Mandate. [Brief appearance of the Mandate formula against black.] From the outset, we expect to find some congruence between Plato’s outlook and ours. After all, the Mandate was just as present 2500 years ago as it is now. But how relevant can the allegory of the cave still be, and how readily can we describe it mathematically?

“Let’s immediately spoil it: very relevant and very readily.”

[SN]: “Consider a day in which only humans and animals are transiting on the road. For ease of illustration, let us make them walk in pairs: sometimes two humans, sometimes two animals, and sometimes a human and an animal.” [The cave glimpsed earlier is rendered in cartoon form with shadows on the wall, and with the Specific Mandate equation ghosted over part of it.]

[SN]: “Let us keep a running tally of the moving shadows by type and work out their probabilities.”

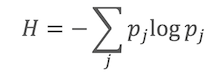

[The Shannon formula separates from the ghosted Mandate and moves to a free spot on screen.]

“This is the same entropy formula we met in Episode 1.

[The shadows start transiting on the wall. A table appears on another free part of the screen, tallying the shadows by kind as they appear, together with their evolving probabilities. A live box displays the changing 𝐻 value.]

“Observe how the 𝐻 value updates with each new shadow. If the ratio between shadows does not change significantly over time, the entropy eventually converges to a narrow range about a mean, remaining largely unaffected by new data.

“This is the uncertainty associated with the question: What is the probability of shadow j, for 𝑗 either a human or an animal?

“Let’s freeze the current 𝐻 value and preserve it. [Value freezes and is set aside on the screen.]
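The running tally and the live 𝐻 box can be imitated in a few lines of Python. This is only a minimal sketch: the shadow sequence is invented for illustration, the names `shannon_entropy`, `tally` and `history` are hypothetical, and base-2 logarithms (entropy in bits) are an assumption, since the script does not state the base used on screen.

```python
import math
from collections import Counter


def shannon_entropy(counts):
    """Entropy in bits of the empirical distribution given by a tally."""
    total = sum(counts.values())
    # p * log2(1/p) is the same as -p * log2(p), written to avoid a "-0.0" result.
    return sum((n / total) * math.log2(total / n) for n in counts.values() if n > 0)


# Hypothetical sequence of shadows crossing the cave wall (illustrative only).
shadows = ["human", "animal", "human", "human", "animal", "human", "animal", "human"]

tally = Counter()   # running count of each kind of shadow
history = []        # H after each new shadow, like the live box on screen

for shadow in shadows:
    tally[shadow] += 1
    h = shannon_entropy(tally)
    history.append(h)
    print(f"{shadow:<7} tally={dict(tally)}  H={h:.3f} bits")
```

With a longer sequence whose human-to-animal ratio stays roughly constant, the printed 𝐻 values would be expected to settle into a narrow band, which is the behaviour the narration describes.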

[MN]: “And what if, instead of individual shadows, we were to examine the occurrence of shadow groupings, as the prisoners would have done in their analysis? What would happen to the entropy? Let us try it with shadow pairs.”

[The quantity 𝑝𝑗 separates from the 𝐻 formula and comes to the fore.]

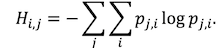

“Now the probability symbol changes from 𝑝𝑗 to 𝑝𝑗,𝑖, representing the probability of elements 𝑗 and 𝑖 occurring together as a specified pair.” [On screen, 𝑝𝑗 morphs to 𝑝𝑗,𝑖.]

“The expression expands to 𝑝𝑗,𝑖 = 𝑝𝑗|𝑖 ∙ 𝑝𝑖, where the term 𝑝𝑗|𝑖 [symbol pulses larger] is the conditional probability. It addresses the question: Given that a certain shadow 𝑖 appears, what is the probability that shadow 𝑗 appears together with it?” [The symbol 𝑖 and the shadow of a man entering the scene are simultaneously highlighted, and the words ‘given that’ appear above the man. Then the shadow of a goat enters the scene and becomes highlighted together with the symbol 𝑗. This is repeated for other pairs, and the sums are tallied and probabilities worked out as before.]

“The entropy formula for paired elements is,

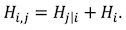
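Substituting the product rule 𝑝𝑗,𝑖 = 𝑝𝑗|𝑖 ∙ 𝑝𝑖 into the paired formula splits it into two parts. The steps can be sketched as follows, assuming the paired formula has the standard Shannon form with 𝑝𝑗,𝑖 in place of 𝑝𝑗, and using the fact that the conditional probabilities 𝑝𝑗|𝑖 sum to 1 over 𝑗 for each fixed 𝑖:

```latex
\begin{align*}
H_{j,i} &= -\sum_{i}\sum_{j} p_{j,i}\,\log p_{j,i} \\
        &= -\sum_{i}\sum_{j} p_{j|i}\,p_{i}\,\bigl(\log p_{i} + \log p_{j|i}\bigr) \\
        &= -\sum_{i} p_{i}\log p_{i}\,\underbrace{\sum_{j} p_{j|i}}_{=\,1}
           \;-\; \sum_{i} p_{i}\sum_{j} p_{j|i}\,\log p_{j|i} \\
        &= H_{i} + H_{j|i}
\end{align*}
```

The first term is the entropy of the single shadow 𝑖, and the second is the conditional entropy of shadow 𝑗 given shadow 𝑖.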

“Substituting, expanding and rearranging, we obtain 𝐻𝑗,𝑖 = 𝐻𝑖 + 𝐻𝑗|𝑖.

“Here, 𝐻𝑗|𝑖 is the conditional entropy, the uncertainty associated with the question: What is the probability of shadow 𝑗 given shadow 𝑖?

“The joint entropy 𝐻𝑗,𝑖 is always less than or equal to the sum of the unconditional entropies 𝐻𝑖 and 𝐻𝑗, with equality only when the two shadows are independent. In other words, there is less uncertainty if dependencies are taken into account. This reasoning can be extended to groups of more than two elements.”

[MN is seen walking away from the shore. Cut to the inside of the car he is now driving.]

[MN]: “Much of our understanding of the world is based on joint entropy, and especially on one of its components, conditional entropy.
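The way joint entropy splits into its components can be checked numerically. The following is a minimal Python sketch, assuming base-2 logarithms and an invented tally of shadow pairs (first walker 𝑖, second walker 𝑗); the counts and all variable names are illustrative and do not come from the episode.

```python
import math
from collections import defaultdict


def entropy(probs):
    """Shannon entropy in bits of a collection of probabilities."""
    return sum(p * math.log2(1 / p) for p in probs if p > 0)


# Hypothetical tally of shadow pairs (i, j); illustrative numbers only.
pair_counts = {
    ("human", "human"): 4,
    ("human", "animal"): 1,
    ("animal", "human"): 1,
    ("animal", "animal"): 2,
}
total = sum(pair_counts.values())
p_joint = {pair: n / total for pair, n in pair_counts.items()}

# Marginal probabilities of the first (i) and second (j) shadow.
p_i = defaultdict(float)
p_j = defaultdict(float)
for (i, j), p in p_joint.items():
    p_i[i] += p
    p_j[j] += p

h_joint = entropy(p_joint.values())   # joint entropy of the pair
h_i = entropy(p_i.values())           # entropy of the first shadow alone
h_j = entropy(p_j.values())           # entropy of the second shadow alone

# Conditional entropy of j given i, computed directly from p(j|i) = p(j,i) / p(i).
h_j_given_i = sum(
    p_i[i] * entropy([p / p_i[i] for (first, _), p in p_joint.items() if first == i])
    for i in list(p_i)
)

print(f"joint entropy          = {h_joint:.4f} bits")
print(f"H_i + H_j|i            = {h_i + h_j_given_i:.4f} bits")
print(f"H_i + H_j (no linkage) = {h_i + h_j:.4f} bits")
```

In this made-up tally, like tends to walk with like, so the joint entropy comes out lower than the sum of the two single-shadow entropies, while it matches the sum of the first shadow's entropy and the conditional entropy.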

“Right now, I am driving towards a harbour, where I am hoping to catch the ferry back home. Usually, the ferry stays docked for 15 minutes before sailing again, and I need to get there during that interval. [Checks his watch.] I am cutting it close.

“I ask myself, ‘What is the probability of being at the dock and the ferry being at the dock at the same time?’ The probability of me being there in time is not 100 percent, and the probability of the ferry being there during the same interval is also not 100 percent. It’s a joint probability problem, similar to the one Plato illustrated with that cave scenario, long before probability theories were formulated.

“Since then, we have been following the Mandate with increasing fidelity. We have implemented systems of schedules, timetables and regulations, often based on probability studies. Ferry sailings are scheduled according to the probability of high traffic; average crossing times are calculated based on the probability of adverse weather; replacement ferries are kept ready in proportion to the probability of mechanical breakdowns; and so on. All in all, it’s a system that works well.

[The car crests a rise. Below, the ferry is seen arriving at the dock. The scene picks up again with MN beside his car at dockside.]

“How did the ferry corporation arrive at this fine-tuned system?

“In essence, by first imagining what constitutes chaos for travelers: haphazard schedules, missing or unclear signs, inadequate information from unprepared staff, etc. Then, without realizing it, the corporation followed the Mandate by reducing the envisioned entropy.

“This morning, when I was planning my excursion to this island, the uncertainty in my plan was fairly low and my confidence fairly high. This was particularly true of the conditional entropy for questions like: Given that I will be at the dock at [insert time], what is the probability of boarding the ferry?

“It is easy to see how a system that minimizes entropy components makes for orderly mental states and successful outcomes.” [MN enters the car. Car is seen boarding the ferry.]

[SN speaking. The words are accompanied by math animation over a view of MN on the ferry deck looking over the moving seascape.] “Interactions with environments are not limited to just two variables. The question, ‘Given that I will be at the dock on time, what is the probability of boarding the ferry?’ is nested within a long succession of implicit conditional statements, such as: ‘Given that I will be at the dock at [insert time], AND that it is a weekday, AND that the sailings are on the summer schedule, AND that I am at the intended geographic location,’ and so on.” [Full mathematical development is animated against black.] “Why are such statements usually omitted? Because their probabilities are close to 1, and thus they contribute little to entropy levels. In everyday conversation, we would simply say that the statements are obvious and thus not worth considering.”
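A small numerical illustration of why near-certain conditions add so little uncertainty: the Python sketch below evaluates the entropy of a single yes/no statement at several probabilities. The function name `binary_entropy`, the probabilities attached to each statement, and the use of base-2 logarithms are all assumptions made for illustration.

```python
import math


def binary_entropy(p):
    """Entropy in bits of a yes/no statement that holds with probability p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0  # a certain outcome carries no uncertainty
    return p * math.log2(1 / p) + (1 - p) * math.log2(1 / (1 - p))


# Hypothetical probabilities for the implicit conditions (illustrative only).
conditions = {
    "a coin toss, for comparison": 0.5,
    "it is a weekday": 0.99,
    "the summer schedule applies": 0.999,
    "I am at the intended location": 0.9999,
}

for statement, p in conditions.items():
    print(f"{statement:<32} p={p:<7} H={binary_entropy(p):.4f} bits")
```

Under these assumed values, each near-certain condition contributes only a small fraction of the one bit carried by the coin toss, which is consistent with the narration's point that such statements can be left unsaid.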

[MN’s car is seen exiting the ferry at Swartz Bay. As MN monologues, the camera view alternates from the inside of the car to long shots of the car navigating through high rises.]

[MN]: “We attempt to minimize entropy with respect to questions that concern us, like: How do I find a particular address? Where is the next store? At what time does it open?

“Joint and conditional entropy aspects always accompany us, hidden in plain sight within our actions, thoughts, and intentions. To satisfy the drive to minimize entropy we even design cities arranged in grid patterns, and we establish clear rules by which to navigate them. The Mandate’s influence is everywhere we look, with order and predictability as its hallmark.

“And still the Allegory of the Cave challenges us with unanswered questions. Can everything we see and attempt to analyze be just projections of something beyond our ken? Could even our familiar cities, these trees by the road, those clouds and mountains across the strait, be the equivalent of shadows without substance?

“We will explore the question in the next episode.”